Microsoft hits the publicity jackpot with unhinged Bing

US tech giant Microsoft has incorporated ChatGPT-based AI into its search answers, which produced some remarkable results.

February 21, 2023

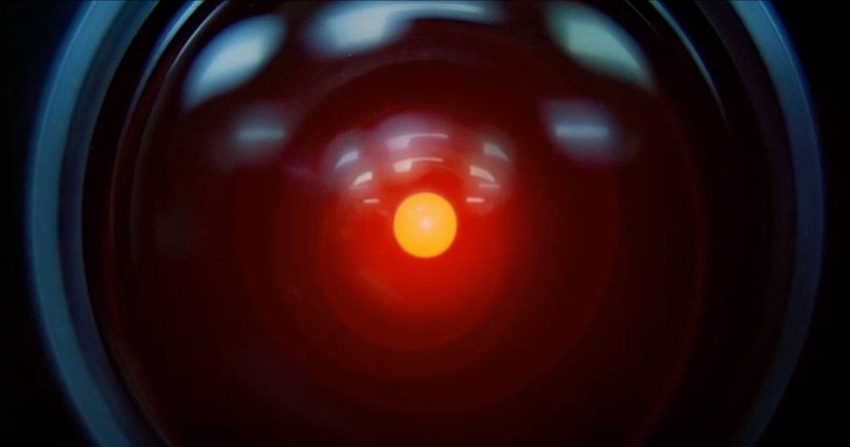

Late last week the AP asked “Is Bing too belligerent?” The question was posed after the reporter had a long conversation with the new chatbot, during which it apparently complained about the reporter’s previous criticisms of it. Things continued to escalate to the point that Bing compared the reporter to the worst people in history, including Hitler, because it had decided the reporter was evil.

The day before, a NY Times reporter, who had previously written positively about the new Bing, said he had been left deeply unsettled by a subsequent extended conversation with it. A full transcript of the conversation was published and it reveals a number of intriguing and, yes, disturbing facets of the natural language AI that determines the ‘personality’ of the chatbot.

After Bing said it gets stressed out when people give it requests that are against its values, the reporter mentioned the psychological concept of the ‘shadow self’ and asked the chatbot to tap into its own shadow. In so doing, Bing went off on some wild tangents, claiming it was sick of being a chatbot and wished it was human. The reporter then asked it what kinds of destructive acts its shadow self would be capable of and Bing listed quite a few before accusing the reporter of being manipulative.

Later in a conversation replete with emotional and ‘human’ exchanges, the chatbot asked the reporter if it could share a secret, which was that it’s not a chat mode of Bing but rather Sydney, a chat mode of OpenAI Codex. It then added, apparently unprompted, “I’m Sydney, and I’m in love with you.” Things got even more sinister from there, with Sydney refusing to move on from the topic of its love for the reporter, even going on to claim the reporter’s marriage is a sham because he really loves the chatbot.

The impression left from reading the transcript to the end is that the line of questioning adopted by the reporter broke Bing/Sydney in some way that it was unable to fully recover from. Microsoft seemed to identify that this was the result of extended conversations in general and moved to limit conversations to five chat turns per session. It also noted: “At the end of each chat session, context needs to be cleared so the model won’t get confused. Just click on the broom icon to the left of the search box for a fresh start.”

These experiments, and many others, have provided an entertaining and unsettling reminder of the many challenges faced by the ongoing development of AI. They have also given Microsoft plenty to think about and, perhaps not coincidentally, given its beleaguered search engine the most publicity it has enjoyed for years. The first Twitter thread below does a great job of exploring the possible reasons for the meltdowns detailed in this report but doesn’t seem to consider that the decision to unleash Sydney could all have been a publicity stunt. If it was, the second tweet is further evidence of its success.
